What AG-UI means for more interactive agentic AI apps

AI agents have gone from strength to strength in the last couple of years. A big reason is AI agent protocols, which have made it easy to sync agents with various systems. Anthropic’s Model Context Protocol (MCP) is a game-changer for connecting AI agents with other tools.

However, there was still a major gap in agentic AI development: agents could not easily connect with an application’s frontend, the part that users see on their screen. That gap was holding AI agents back from becoming full-scale apps. It is now history, as CopilotKit’s Agent-User Interaction Protocol (AG-UI) lets agents connect with user-facing applications with ease.

AG-UI is big news for developers, enterprises, and users because it can turn agents from background processes into something you see and interact with.

So, grab a cup of coffee as we explain what AG-UI is and why it can make AI agent-based apps more interactive. But first, you need some background, including what generative UI is.

Before AG-UI: The problems of agent-user interactions

Okay, you won’t really grasp the utility of AG-UI unless you know how things worked in the old days. Creating AI applications traditionally involves constantly juggling two tasks: the backend work on the agent and the frontend work on the interface.

Now, this sounds simple, but it adds a lot of complexity and extra work. The frontend team has to deal with raw data in the form of JSON responses and turn it into something a human can actually see and use.

That could mean adding a button here, a form there, and maybe a chart somewhere else. But they have to manually write the code for every single possible thing the AI might want to show. This manual process is painstakingly slow and requires constant back-and-forth between the backend and frontend developers.

What is generative UI?

The smart solution to these agent-user interaction problems is to let the AI produce the UI it needs itself. Generative UI, aka GenUI, is an approach that does this by allowing AI agents to create dynamic interfaces at runtime instead of having them designed entirely upfront by people.

In traditional app development, developers decide ahead of time exactly what appears on the screen and how it’s arranged. In GenUI, the agent helps decide what to show, how to organize it, and sometimes even how to lay it out.

For example, instead of the agent returning some data about a form and waiting for a developer to turn that into something visual, the agent creates an actual, ready-to-display form, and it appears on the screen immediately.

Generative UI is a spectrum, and there are many ways to go about it. Specifically, there are three methods to build a GenUI system, depending on whether the UI is fully or partially created by the AI.

| Method | What it does | Pros and cons | Best for |
| --- | --- | --- | --- |
| Static GenUI | Developers build the components ahead of time, and AI only fills in values like text, numbers, or data | Very safe and easy to maintain, but not very flexible | Critical product areas where reliability matters most |
| Declarative GenUI | AI chooses and combines components from a pre-approved set | Balances flexibility and control, but requires effort to build and maintain | Most agentic apps |
| Open-ended GenUI | AI generates the actual interface code from scratch, such as HTML and CSS | Most flexible and creative, but also the riskiest. It can create inconsistent design and introduce security issues | Prototyping, demos, and build-time scaffolding |
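To make the declarative option more concrete, here is a minimal Python sketch of the "pre-approved set" idea. The component catalog, the prop names, and the `validate_spec` helper are all hypothetical and only illustrate the pattern; they are not taken from any specific GenUI library.

```python
# Hypothetical catalog: each approved component and the props it may carry.
APPROVED_COMPONENTS = {
    "text": {"value"},
    "button": {"label", "action"},
    "form_field": {"name", "label"},
}


def validate_spec(spec: list[dict]) -> list[dict]:
    """Return the spec unchanged if every node is approved, else raise ValueError."""
    for node in spec:
        kind = node.get("type")
        if kind not in APPROVED_COMPONENTS:
            raise ValueError(f"component not approved: {kind!r}")
        extra = set(node) - {"type"} - APPROVED_COMPONENTS[kind]
        if extra:
            raise ValueError(f"unexpected props for {kind}: {sorted(extra)}")
    return spec


# The agent proposes a UI built only from approved pieces.
agent_output = [
    {"type": "text", "value": "Confirm your booking"},
    {"type": "button", "label": "Approve", "action": "approve_booking"},
]
validated = validate_spec(agent_output)
```

The agent stays free to decide which components to combine, while anything outside the catalog (for example, a raw script tag) is rejected before it reaches the screen.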

What is AG-UI?

In comes AG-UI, developed by CopilotKit in May 2025 as an open-source protocol that supports the whole range of GenUI methods. It does so with the added benefit of standardizing all communication between AI agents and UIs.

It’s an event-based protocol for a real-time, two-way connection between the agent and an app’s frontend. AG-UI has quickly become the de facto standard for agent-user interactions. Google, Amazon, Microsoft, and LangChain are all using it in their agentic AI products.

AG-UI is the third major open agentic AI protocol to generate a lot of buzz. Together, these three protocols complete the agentic AI tech stack developers need to create agentic AI solutions in 2026.

| Protocol | Created by | Launch date | What it connects | Core purpose |
| --- | --- | --- | --- | --- |
| MCP | Anthropic | November 2024 | AI agents ↔ external tools | Lets agents securely connect to external resources to enhance capabilities |
| A2A | Google | April 2025 | AI agents ↔ AI agents | Defines how multiple AI agents built differently collaborate with each other |
| AG-UI | CopilotKit | May 2025 | AI agents ↔ user interfaces | Enables live, two-way interaction between the agent and the user interface |

How AG-UI actually works (for non-techies)

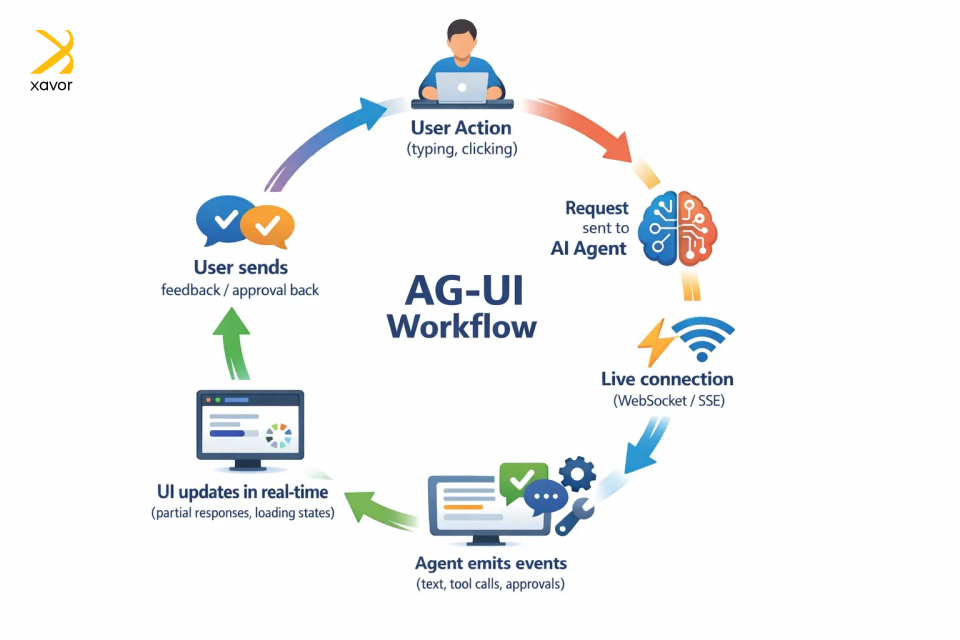

The next few paragraphs cover the AG-UI workflow that makes all of this possible. AG-UI sounds technical, and it is, but the idea behind it is surprisingly simple. We’ll try to make it as understandable as possible for all kinds of readers.

AG-UI works by keeping your app and the agent in a live, two-way conversation instead of the app sending a request and then waiting silently for one final answer.

Here’s the whole workflow in simple terms:

1. Starting the interaction

It all starts with the user taking an action, like typing a prompt, clicking a button, or uploading a file, just like in any app.

Behind the scenes, the app sends that request to an AI agent using AG-UI’s standard format so both sides speak the same language.

2. Opening a live connection

Instead of sending one reply and closing the connection, AG-UI keeps a live channel open between the agent and the app. This is different from regular web or mobile apps that wait for a full response.

This live connection is usually created through Server-Sent Events (SSE) or WebSockets. The channel stays open the entire time the agent is working, so everything the agent does gets delivered to your app the moment it happens.
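For readers who want to see what a live stream looks like on the wire, here is a simplified Python sketch of reading SSE messages, where each message is one or more `data:` lines followed by a blank line. The event names (`run_started`, `text_delta`, `run_finished`) are illustrative assumptions and not the protocol's actual schema, and the parser handles only the `data:` field.

```python
import json
from typing import Iterable, Iterator


def parse_sse(lines: Iterable[str]) -> Iterator[dict]:
    """Yield one JSON-decoded event per SSE message (a blank line ends a message)."""
    data_parts: list[str] = []
    for raw in lines:
        line = raw.rstrip("\n")
        if line.startswith("data:"):
            # Keep the payload after the field name, minus leading spaces.
            data_parts.append(line[len("data:"):].lstrip(" "))
        elif line == "" and data_parts:
            yield json.loads("\n".join(data_parts))
            data_parts = []


# A stand-in for the lines arriving over an open HTTP connection.
stream = [
    'data: {"type": "run_started"}\n',
    "\n",
    'data: {"type": "text_delta", "delta": "Hel"}\n',
    "\n",
    'data: {"type": "run_finished"}\n',
    "\n",
]
events = list(parse_sse(stream))
```

Because `parse_sse` is a generator, the app can act on each event as soon as its blank line arrives rather than waiting for the stream to close.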

3. Sending structured events

As the agent works, it sends back small, well-defined updates as events. Each event represents a specific thing that happened, such as:

- I generated some text

- I’m calling a tool

- I need user approval

AG-UI defines 16 standardized event types for real-time communication. The app listens for those events and updates the screen instantly.
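The three example events above can be sketched as a small dispatcher in Python. The `EventRouter` class and the event names (`text_delta`, `tool_call`, `approval_request`) are hypothetical stand-ins chosen to mirror the list, not the real AG-UI event types.

```python
from typing import Callable


class EventRouter:
    """Maps an event's "type" field to the handler that updates the screen."""

    def __init__(self) -> None:
        self._handlers: dict[str, Callable[[dict], None]] = {}

    def on(self, event_type: str, handler: Callable[[dict], None]) -> None:
        self._handlers[event_type] = handler

    def dispatch(self, event: dict) -> bool:
        """Run the matching handler; return False for unknown event types."""
        handler = self._handlers.get(event.get("type"))
        if handler is None:
            return False
        handler(event)
        return True


screen_log: list[str] = []  # stands in for the visible UI
router = EventRouter()
router.on("text_delta", lambda e: screen_log.append(f"text: {e['delta']}"))
router.on("tool_call", lambda e: screen_log.append(f"calling tool: {e['name']}"))
router.on("approval_request", lambda e: screen_log.append(f"needs approval: {e['question']}"))

events = [
    {"type": "text_delta", "delta": "Looking up your order"},
    {"type": "tool_call", "name": "order_lookup"},
    {"type": "approval_request", "question": "Cancel order 1042?"},
    {"type": "heartbeat"},  # no handler registered, so it is skipped
]
handled = [router.dispatch(e) for e in events]
```

Ignoring unknown types instead of raising is a deliberate choice in this sketch, so a frontend keeps working if the agent starts sending event types it does not yet understand.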

4. Updating the UI instantly

Because the app receives updates step by step, it can update the screen immediately. That might mean showing partial text, displaying progress, or reflecting a new action without waiting for the full process to finish.

From the user’s perspective, the AI feels alive and responsive, not stuck or guessing.
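A rough illustration of step-by-step rendering: the Python sketch below appends each text fragment to the visible message as it arrives, and records every intermediate "frame" the user would see. The `MessageView` class and event names are assumptions made for this example.

```python
class MessageView:
    """Holds the text currently shown on screen for one agent reply."""

    def __init__(self) -> None:
        self.text = ""
        self.done = False
        self.frames: list[str] = []  # every intermediate state that was rendered

    def apply(self, event: dict) -> None:
        if event["type"] == "text_delta":
            self.text += event["delta"]
            self.frames.append(self.text)  # re-render with the partial text
        elif event["type"] == "run_finished":
            self.done = True


view = MessageView()
for event in [
    {"type": "text_delta", "delta": "Your "},
    {"type": "text_delta", "delta": "order "},
    {"type": "text_delta", "delta": "shipped."},
    {"type": "run_finished"},
]:
    view.apply(event)
```

The user sees three partial renders before the run ends, which is what makes the reply feel like it is being typed live.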

5. Two-way communication

Communication isn’t one-sided. The frontend can also send information back while the agent is still running, such as user approvals, extra input, interface context, or a cancel request.
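The two-way flow can be sketched with Python's `asyncio`, using two in-memory queues in place of a real network channel. The `agent` and `frontend` coroutines, the refund scenario, and the message shapes are all hypothetical; the point is only that the agent pauses mid-run until the frontend answers.

```python
import asyncio


async def agent(outbox: asyncio.Queue, inbox: asyncio.Queue) -> None:
    """Emit events, then pause until the frontend answers the approval request."""
    await outbox.put({"type": "text_delta", "delta": "Drafting refund..."})
    await outbox.put({"type": "approval_request", "question": "Issue refund?"})
    reply = await inbox.get()  # the run is still open while we wait here
    outcome = "Refund issued." if reply.get("approved") else "Refund cancelled."
    await outbox.put({"type": "text_delta", "delta": outcome})
    await outbox.put({"type": "run_finished"})


async def frontend(outbox: asyncio.Queue, inbox: asyncio.Queue, approve: bool) -> list[str]:
    """Render text events and answer approval requests with the user's choice."""
    shown: list[str] = []
    while True:
        event = await outbox.get()
        if event["type"] == "text_delta":
            shown.append(event["delta"])
        elif event["type"] == "approval_request":
            await inbox.put({"type": "approval_response", "approved": approve})
        elif event["type"] == "run_finished":
            return shown


async def run_session(approve: bool) -> list[str]:
    outbox: asyncio.Queue = asyncio.Queue()
    inbox: asyncio.Queue = asyncio.Queue()
    agent_task = asyncio.create_task(agent(outbox, inbox))
    shown = await frontend(outbox, inbox, approve)
    await agent_task
    return shown


transcript = asyncio.run(run_session(approve=True))
```

Calling `run_session(approve=False)` follows the same path but ends with the cancellation message, which shows the user's click changing the outcome of a run that is already in progress.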

AG-UI vs A2UI: What’s the difference?

AG-UI and A2UI are two similar-sounding, overlapping technologies in the AI agent ecosystem. But they serve different purposes and are not direct competitors. Quite the contrary, AG-UI and A2UI are complementary technologies that can be used together to create interactive AI apps.

A2UI (Agent-to-User Interface) is a declarative GenUI specification developed by Google. The key point is that it’s a generative UI spec, not a protocol like AG-UI. That means A2UI doesn’t generate raw HTML or JavaScript to build the interface directly.

Instead of writing UI code, A2UI describes what to render through a JSON description, which is basically a structured note that clearly states what should appear and what it contains.

Each platform then takes that description and builds the UI its own way, using its own native components. React renders it with React components. Flutter renders it with Flutter widgets. SwiftUI renders it with SwiftUI views.
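As a rough picture of "one description, many renderers", the Python sketch below feeds the same JSON description to two renderers, one producing HTML and one producing plain text. The JSON shape (`component`, `title`, `children`) is a made-up example for illustration and is not the actual A2UI schema.

```python
import json
from html import escape

# Hypothetical description an agent might send; NOT the real A2UI format.
description = json.loads("""
{"component": "card", "title": "Flight to Lisbon", "children": [
    {"component": "text", "value": "Departs 09:40"},
    {"component": "button", "label": "Book"}
]}
""")


def render_html(node: dict) -> str:
    """Web renderer: maps each component to an HTML element."""
    kind = node["component"]
    if kind == "text":
        return f"<p>{escape(node['value'])}</p>"
    if kind == "button":
        return f"<button>{escape(node['label'])}</button>"
    if kind == "card":
        inner = "".join(render_html(child) for child in node.get("children", []))
        return f"<section><h2>{escape(node['title'])}</h2>{inner}</section>"
    raise ValueError(f"unknown component: {kind!r}")


def render_text(node: dict, indent: int = 0) -> str:
    """Terminal renderer: same description, different native output."""
    pad = "  " * indent
    kind = node["component"]
    if kind == "text":
        return pad + node["value"]
    if kind == "button":
        return f"{pad}[ {node['label']} ]"
    if kind == "card":
        lines = [pad + node["title"].upper()]
        lines += [render_text(child, indent + 1) for child in node.get("children", [])]
        return "\n".join(lines)
    raise ValueError(f"unknown component: {kind!r}")


html_view = render_html(description)
text_view = render_text(description)
```

The agent never needs to know which renderer is on the other end; it only describes the card, and each platform decides how a card looks.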

AG-UI is the transport protocol that carries A2UI as the content. That is why they’re related but not competing. AG-UI can transport many kinds of interaction data, and it can work with multiple generative UI specs, including A2UI, MCP-UI, and Open-JSON-UI.

| | A2UI | AG-UI |
| --- | --- | --- |
| Purpose | GenUI specification | Agentic AI protocol |
| Focus | UI rendering | Two-way communication |
| Answers | “What to render?” | “How do the agent and app interact?” |
| Cross-platform | Yes | Platform agnostic |
| Developer | Google | CopilotKit |

The impact of AG-UI on agentic AI applications

AG-UI is a major juncture in AI-powered app development, and we don’t think enough people are talking about it yet. Well, at least not in the non-tech world, because in Silicon Valley, it’s the talk of the town.

Right now, AI agent-based apps are very smart and powerful, but interactivity isn’t their forte. And if you really want to build an AI agent that actually does things on screen, it takes a ton of custom work.

AG-UI, by contrast, is a common language between agents and user interfaces. Any agent built on AG-UI can plug into any supported app out of the box. This has profound implications for developers, enterprises, and users alike.

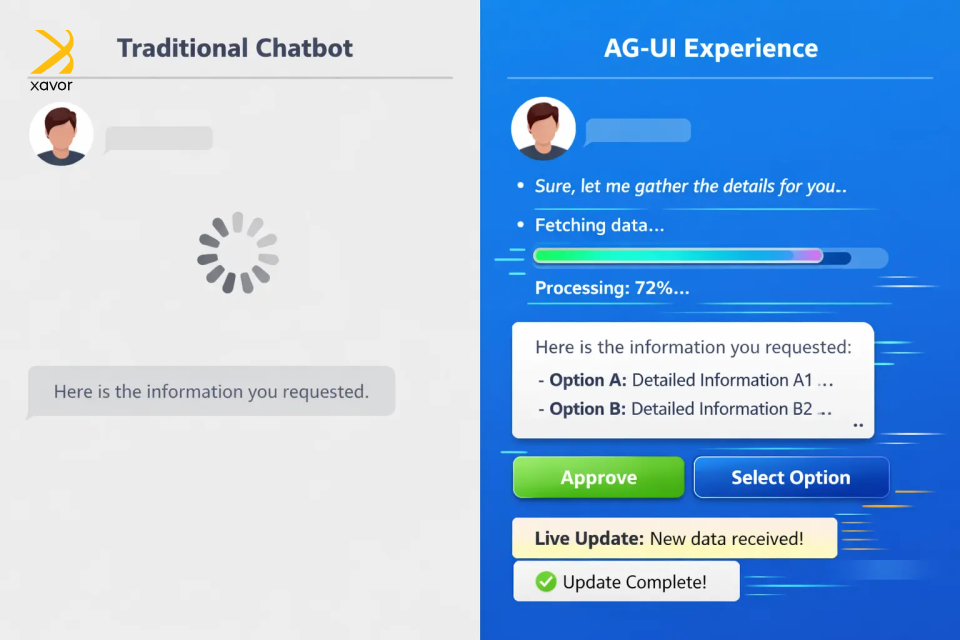

1. It makes AI truly responsive and interactive

In a basic chatbot experience, the user asks for something, the interface goes quiet, and then a final answer appears. Even if the AI is doing useful work in the background, the user cannot see what is happening. That often makes the experience feel slow and a bit untrustworthy. People start wondering whether the agent is still working or is stuck.

AG-UI helps solve that by making the AI feel more present during the task. Instead of hiding the process, the app can show useful progress updates on the go. That visibility matters because users are no longer waiting blindly. They can see that the system is actively moving through steps and working toward an outcome.

But the bigger shift is that AG-UI also lets users interact with the process itself. The app can present buttons, forms, selections, or approval actions at the right moment. So instead of rewriting the same instruction in text, the user can simply click Approve, choose an option, or fill in a field.

That combination is what makes the experience feel far more natural. The AI is not just replying at the end of a task. It is showing what it’s doing, asking for input when needed, and letting the user guide the process in a clearer way.

AG-UI makes AI feel less like a black-box chatbot and more like an active assistant users can actually work with.

2. It’s the path toward full-fledged AI apps

Currently, AI in an app almost always sits next to your tools, never inside them. You ask it something, copy the answer, and paste it somewhere else. That gap has been a persistent problem for developers and users.

Real products don’t work like this. A dashboard has live data that updates. A workflow has steps, approvals, and a state. A tool remembers what you did last, responds to your clicks, and changes based on your input. These are live interfaces, and until now, AI couldn’t really participate in them.

AG-UI changes the relationship. It gives agents a standardized way to push updates, react to user actions, and stay in sync with what’s happening on screen in real time. The agent is present inside the product, aware of context, and capable of doing things where they actually need to happen.

Work and intelligence occupy the same space. That’s the gap AG-UI closes, turning AI into a capable participant embedded in how work actually flows.

3. It has completed the agentic AI toolkit

Developers have been duct-taping things together for years. AG-UI is a big deal for developers because it gives them a standard way to connect agent backends to user-facing apps. It was the final piece missing from the repertoire of agentic AI development.

AG-UI matters for developers in three practical ways. First, it reduces fragmentation, since an agent that “speaks” AG-UI can work with AG-UI-compatible clients, which makes integration more reusable and less custom.

Second, it makes richer UX patterns easier to build because the protocol already centers on structured events, streaming, and state instead of plain text replies. And third, it supports features developers usually have to invent themselves, like shared state, human-in-the-loop approvals, and predictive state updates.
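Shared state is the least intuitive of those features, so here is a minimal Python sketch of one common way to keep a frontend in sync: a full snapshot first, then small deltas. The `state_snapshot` and `state_delta` event shapes and the key/value `changes` format are assumptions for illustration, not the protocol's actual wire format.

```python
def apply_state_event(state: dict, event: dict) -> dict:
    """Return the frontend's new copy of shared state after one event."""
    if event["type"] == "state_snapshot":
        return dict(event["state"])  # replace everything
    if event["type"] == "state_delta":
        updated = dict(state)
        updated.update(event["changes"])  # overwrite only the changed keys
        return updated
    return state  # unrelated events leave the state untouched


events = [
    {"type": "state_snapshot", "state": {"step": "draft", "approved": False}},
    {"type": "state_delta", "changes": {"step": "review"}},
    {"type": "text_delta", "delta": "Ready for review."},
    {"type": "state_delta", "changes": {"approved": True}},
]

ui_state: dict = {}
for event in events:
    ui_state = apply_state_event(ui_state, event)
```

Sending deltas after the first snapshot keeps each update small, while the snapshot gives a client that connects late a way to catch up.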

All of this has compounding effects for enterprises as well. In fact, the value is even higher for businesses because AG-UI helps AI fit in as real enterprise software. Microsoft explicitly highlights approval workflows for sensitive actions, real-time feedback, and synchronized state for interactive experiences. That’s important in enterprise settings where users need visibility and control.

Conclusion

Major tech breakthroughs have always had a ripple effect on user interface design. Web 2.0 introduced more interactive, dynamic UIs where users could click around to explore. It was a huge improvement over the boring static interfaces of the early days of the web.

AI is also opening the door to the next age of user interactions. This time, the interface will become more adaptive with AG-UI. Instead of only reacting to clicks, an AG-UI-powered app can respond to the user’s intent and context and shape the experience in real time. This will effectively make UI a part of the system’s output.

This piece was just our perspective on what your product could look like if AI could actively participate in UI design instead of just supporting it. There’s a lot more to share, as Xavor’s agentic AI services team is working on AG-UI, MCP, A2UI, and other emerging technologies to create agent-native products.

Contact us at [email protected] if you want to discuss your AI agent project. Our agentic AI experts will help architect and implement AI systems that integrate directly into your workflows and products.

FAQs

AG-UI is used to connect AI agents with user-facing applications in real time. It lets apps stream agent updates, show interactive UI elements, collect user input or approvals, and keep the frontend and agent in sync during a task.

MCP-UI focuses on what interface components an AI can expose through MCP-connected tools. AG-UI focuses on how the agent and the frontend communicate in real time, including streaming updates, approvals, and shared state during the interaction.

Generative UI creates interface elements dynamically at runtime as part of the product experience. AI-assisted design, by contrast, helps humans design interfaces faster, but the final UI is still usually reviewed and built upfront.
