The Strongest AI Model You Can Train on a Laptop in 5 Minutes

Question:

What’s the most powerful AI model you can train on a MacBook Pro in just five minutes?

Short answer:

The best I managed was a ~1.8M-parameter GPT-style transformer, trained on ~20M TinyStories tokens. It reached a perplexity of ~9.6 on a held-out split.

Example output (prompt in bold):

Once upon a time, there was a little boy named Tim. Tim had a small box that he liked to play with. He would push the box to open. One day, he found a big red ball in his yard. Tim was so happy. He picked it up and showed it to his friend, Jane. “Look at my bag! I need it!” she said. They played with the ball all day and had a great time.

Not exactly Shakespeare, but not bad for five minutes.
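For readers less familiar with the metric quoted above, perplexity is the exponential of the average per-token cross-entropy loss. The sketch below shows that relationship; the function names are illustrative and not taken from the original experiment.

```python
import math

def perplexity(token_nlls):
    """Perplexity = exp(mean negative log-likelihood per token, in nats)."""
    return math.exp(sum(token_nlls) / len(token_nlls))

def loss_for_perplexity(ppl):
    """Invert the relationship: the average loss that yields a given perplexity."""
    return math.log(ppl)

# A model that is always uniformly unsure between 4 tokens has perplexity ~4.
uniform_nlls = [math.log(4)] * 8
print(perplexity(uniform_nlls))

# The reported perplexity of ~9.6 corresponds to an average loss of ~2.26 nats.
print(round(loss_for_perplexity(9.6), 2))  # 2.26
```

Read this way, a perplexity of ~9.6 means the model is, on average, about as uncertain as if it were choosing uniformly among roughly ten tokens at each step.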

The Challenge

This was mostly a fun, curiosity-driven experiment, and maybe a little silly, for two reasons:

- If you can afford a MacBook Pro, you could just rent 30 minutes on an H100 GPU and train something vastly stronger.

- If you’re stuck with a laptop, there’s no real reason to limit training to five minutes.

That said, constraints breed creativity. The goal: train the best possible language model in just five minutes of compute time.
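One simple way to enforce a constraint like this is to train against a wall-clock deadline instead of a fixed step count. The following is a minimal sketch of that idea, not the author's actual harness; `train_for` and `step_fn` are hypothetical names, and the toy step is a placeholder for a real forward/backward pass.

```python
import time

def train_for(budget_seconds, step_fn):
    """Call step_fn(step_index) repeatedly until the time budget runs out.

    The deadline is only checked between steps, so the final step can
    overrun the budget by up to one step's duration.
    """
    deadline = time.monotonic() + budget_seconds
    steps = 0
    while time.monotonic() < deadline:
        step_fn(steps)
        steps += 1
    return steps

# Toy usage: a 0.05-second budget with a placeholder "training step".
steps_done = train_for(0.05, lambda step: sum(range(1000)))
print(f"completed {steps_done} steps")
```

For the real experiment the budget would be `5 * 60` seconds, and anything excluded from the budget (such as tokenizer training) would run before the call.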

Key Limitation: Tokens per Second

Five minutes isn’t long enough to push many tokens through a model, so:

- Large models are out: they’re too slow per token.

- Tiny models train quickly, but can’t learn much.

It’s a balancing act: better to train a 1M-parameter model on millions of tokens than a billion-parameter model on a few thousand.

Performance Optimization

Initial transformer training on Apple’s MPS backend hit ~3,000 tokens/sec.
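To put that throughput in context, here is the budget arithmetic as a short sketch. It assumes the full five minutes is spent on training steps at a constant rate and that each token is seen once; both are simplifications, and the helper names are illustrative.

```python
BUDGET_SECONDS = 5 * 60

def tokens_in_budget(tokens_per_sec, seconds=BUDGET_SECONDS):
    """Total tokens processed at a constant throughput."""
    return tokens_per_sec * seconds

def required_throughput(total_tokens, seconds=BUDGET_SECONDS):
    """Constant throughput needed to process total_tokens within the budget."""
    return total_tokens / seconds

# At the initial ~3,000 tokens/sec, five minutes covers under a million tokens.
print(tokens_in_budget(3_000))  # 900000

# Processing ~20M tokens in five minutes would need roughly 67k tokens/sec.
print(round(required_throughput(20_000_000)))  # 66667
```

Under these assumptions, the gap between the initial rate and the ~20M tokens reported for the final model is more than an order of magnitude, which is why throughput dominates every other design decision here.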

Surprisingly:

- torch.compile, float16, and other math tweaks didn’t help.

- Gradient accumulation made things slower (launch overhead was the real bottleneck).

- Switching from PyTorch to MLX gave no meaningful boost.

Best practices for this scale:

- Use MPS

- Skip compilation/quantization

- Avoid gradient accumulation

- Keep the model small
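In PyTorch terms, the recommendations above amount to a very plain setup. The sketch below is one possible rendering and not the author's code: the `nn.Linear` model is a hypothetical stand-in for a small transformer, and AdamW is an assumed optimizer that the source does not name.

```python
import torch
import torch.nn as nn

def pick_device():
    """Prefer Apple's MPS backend when present, otherwise fall back to CPU."""
    if torch.backends.mps.is_available():
        return torch.device("mps")
    return torch.device("cpu")

def train_step(model, optimizer, loss_fn, inputs, targets):
    """One forward/backward/update per batch, with no gradient accumulation."""
    optimizer.zero_grad(set_to_none=True)
    loss = loss_fn(model(inputs), targets)
    loss.backward()
    optimizer.step()
    return loss.item()

device = pick_device()
model = nn.Linear(16, 4).to(device)  # hypothetical stand-in for a tiny transformer
optimizer = torch.optim.AdamW(model.parameters(), lr=1e-3)  # optimizer is an assumption

# Random placeholder batch: 32 examples, 16 features, 4 classes.
inputs = torch.randn(32, 16, device=device)
targets = torch.randint(0, 4, (32,), device=device)

loss_value = train_step(model, optimizer, nn.CrossEntropyLoss(), inputs, targets)
print(f"device={device.type} loss={loss_value:.3f}")
```

Note what is absent: no `torch.compile`, no half-precision casting, and no loop that sums gradients over several micro-batches before stepping.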

Choosing the Right Dataset

With ~10M tokens (~50MB text), dataset choice matters.

- Simple English Wikipedia was an okay start, but output was fact-heavy and noun-obsessed.

- TinyStories (synthetic, short, 4-year-old-level stories) worked far better:

- Coherent narratives

- Cause-and-effect logic

- Minimal proper nouns

- Simple grammar

Perfect for small language models.

Tokenization

Tokenizer training wasn’t counted in the five-minute budget. At this scale:

- Tokenization overhead is negligible.

- Multi-byte tokens are easier for small models to learn than raw characters.
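To illustrate how multi-byte tokens shorten the sequences a model has to learn from, here is a minimal byte-pair-encoding sketch. The source does not say which tokenizer was used, so BPE is assumed purely for illustration, and the sample text and merge count are arbitrary.

```python
from collections import Counter

def most_frequent_pair(ids):
    """Return the most common adjacent pair of token ids, or None if too short."""
    pairs = Counter(zip(ids, ids[1:]))
    return pairs.most_common(1)[0][0] if pairs else None

def merge_pair(ids, pair, new_id):
    """Replace every non-overlapping occurrence of pair with new_id."""
    out, i = [], 0
    while i < len(ids):
        if i + 1 < len(ids) and (ids[i], ids[i + 1]) == pair:
            out.append(new_id)
            i += 2
        else:
            out.append(ids[i])
            i += 1
    return out

def train_bpe(text, num_merges):
    """Start from raw UTF-8 bytes and greedily merge the most frequent pairs."""
    ids = list(text.encode("utf-8"))
    merges = {}
    for step in range(num_merges):
        pair = most_frequent_pair(ids)
        if pair is None:
            break
        new_id = 256 + step  # ids 0..255 are reserved for single bytes
        ids = merge_pair(ids, pair, new_id)
        merges[pair] = new_id
    return ids, merges

text = "the cat sat on the mat. the cat was happy."
ids, merges = train_bpe(text, num_merges=10)
raw_len = len(text.encode("utf-8"))
print(f"{raw_len} bytes -> {len(ids)} tokens after {len(merges)} merges")
```

Each merge lets one token stand for a frequent multi-byte chunk, so the model sees fewer, more meaningful symbols per story than it would with raw characters.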

Architecture Experiments

Transformers

- GPT-2 style was the default choice.

- SwiGLU activation gave a boost.

- 2–3 layers worked best.

- Learning rate: 0.001–0.002 was optimal for fast convergence.

- Positional embeddings outperformed RoPE.
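As a reference for the SwiGLU item above, a gated feed-forward block of this kind can be sketched in PyTorch as follows. The layer names, the bias-free linear layers, and the hidden size are illustrative assumptions; the source gives no implementation details.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

class SwiGLUFeedForward(nn.Module):
    """Feed-forward block computing w_down(SiLU(w_gate(x)) * w_up(x))."""

    def __init__(self, d_model, d_hidden):
        super().__init__()
        self.w_gate = nn.Linear(d_model, d_hidden, bias=False)
        self.w_up = nn.Linear(d_model, d_hidden, bias=False)
        self.w_down = nn.Linear(d_hidden, d_model, bias=False)

    def forward(self, x):
        # The SiLU-activated gate scales the linear "up" projection elementwise.
        return self.w_down(F.silu(self.w_gate(x)) * self.w_up(x))

ffn = SwiGLUFeedForward(d_model=64, d_hidden=128)  # hypothetical sizes
x = torch.randn(2, 10, 64)  # (batch, sequence, d_model)
y = ffn(x)
print(y.shape)
```

Compared with a standard two-matrix MLP, this variant uses three weight matrices, so the hidden size is often reduced to keep the parameter count comparable.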

LSTMs

- Similar structure, but slightly worse perplexity than transformers.

Diffusion Models

- Tried D3PM language diffusion: results were unusable, producing random tokens.

- Transformers & LSTMs reached grammatical output within a minute; diffusion didn’t.

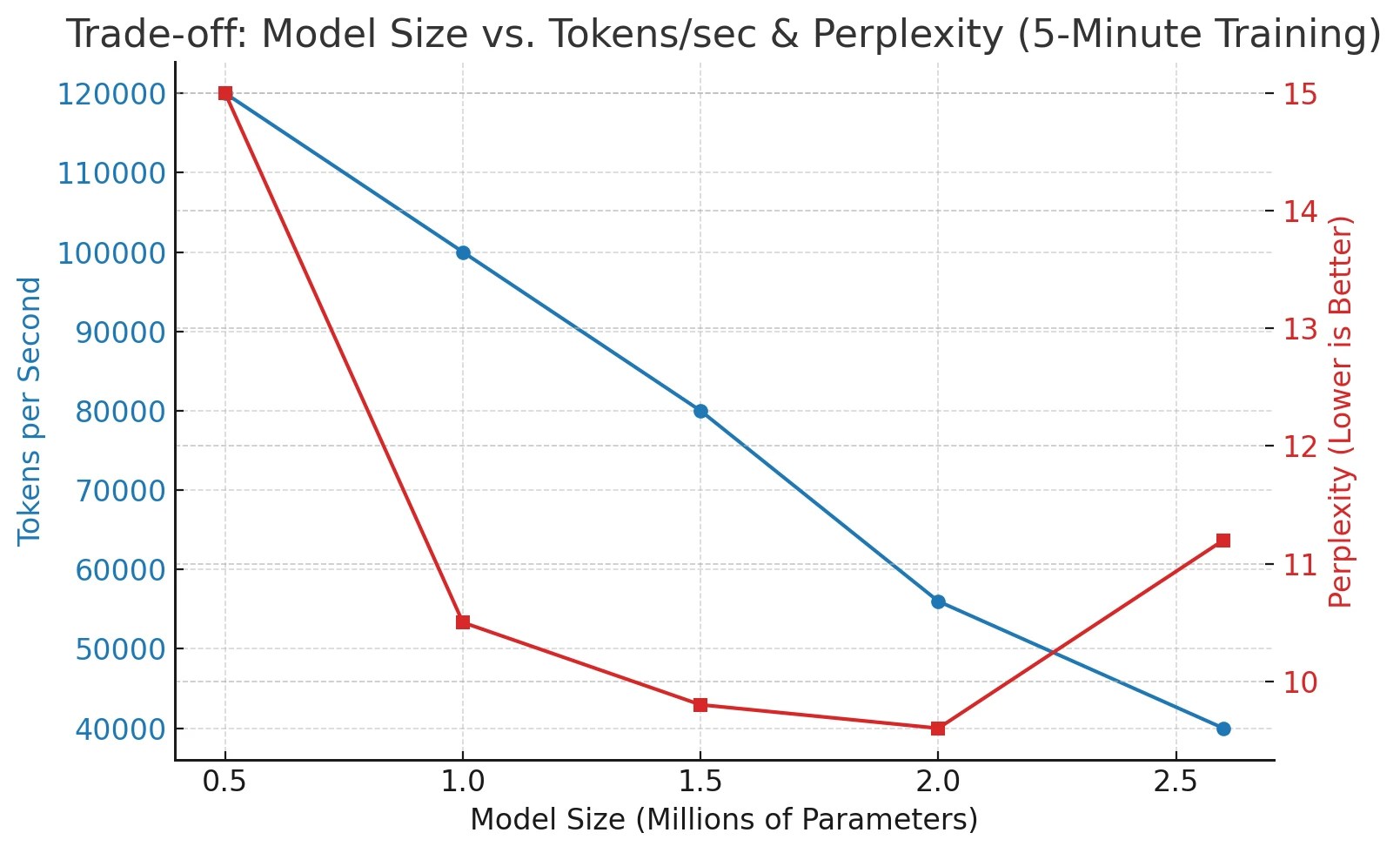

Finding the Sweet Spot in Model Size

Experimenting with sizes revealed:

- ~2M parameters was the upper practical limit.

- Any bigger: too slow to converge in five minutes.

- Any smaller: plateaued too early.

This lined up with the Chinchilla scaling laws, which relate optimal model size to training tokens.
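A rough sketch of the arithmetic behind that comparison follows. The parameter-count formula is a common approximation for a GPT-style model with a SwiGLU MLP and bias-free linear layers, and the configuration passed to it is hypothetical, not the one used in the experiment. The "about 20 tokens per parameter" figure is the rule of thumb usually quoted from the Chinchilla work, not a number from this article.

```python
def gpt_param_count(vocab_size, context_len, d_model, n_layers, d_ff,
                    tie_embeddings=True):
    """Approximate parameter count for a small GPT-style transformer."""
    token_emb = vocab_size * d_model
    pos_emb = context_len * d_model          # learned positional embeddings
    attention = 4 * d_model * d_model        # Q, K, V and output projections
    mlp = 3 * d_model * d_ff                 # SwiGLU: gate, up, down matrices
    layer_norms = 2 * 2 * d_model            # two norms per layer, weight + bias
    final_norm = 2 * d_model
    head = 0 if tie_embeddings else vocab_size * d_model
    per_layer = attention + mlp + layer_norms
    return token_emb + pos_emb + n_layers * per_layer + final_norm + head

def tokens_per_parameter(train_tokens, n_params):
    """Ratio compared against the commonly quoted ~20 tokens per parameter."""
    return train_tokens / n_params

# Hypothetical configuration in the low-millions range discussed above.
n_params = gpt_param_count(vocab_size=4096, context_len=256,
                           d_model=128, n_layers=3, d_ff=512)
print(n_params)  # 1345280

# The reported run: ~20M tokens for a ~1.8M-parameter model.
print(round(tokens_per_parameter(20_000_000, 1_800_000), 1))  # 11.1
```

At roughly 11 tokens per parameter, the reported run sits within a factor of two of the usual rule of thumb, which is consistent with the observation that much larger models could not be fed enough tokens in five minutes.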

Final Thoughts

This experiment won’t change the future of AI training: most interesting behavior happens after five minutes. But it was:

- A great way to explore tiny-model training dynamics

- A fun test of laptop GPU capabilities

- Proof that you can get a coherent storytelling model in five minutes

With better architectures and faster consumer GPUs, we might eventually see surprisingly capable models trained in minutes, right from a laptop.
